When AI Training Meets “Food Delivery”: Why Do Legacy Systems Struggle?

Now envision an AI-powered “delivery dispatcher” that must excel in two areas:

- Delivering orders quickly (general capability)

- Simultaneously monitoring riders for violations like speeding or running red lights (safety/trustworthiness)

Yet traditional training frameworks face multiple limitations:

- Compartmentalized workflow: Diverse demands (training, inference generation, validation scoring) must be split across separate “stations” handled by different “riders” (clusters/GPUs).

- Rigid architecture: Adding new constraints (e.g., safety/knowledge/value validators) often requires major pipeline overhauls or complete rebuilds.

- Poor scaling: More “riders” (GPUs) paradoxically worsen resource imbalance: some idle while others are overloaded to the point of overheating.

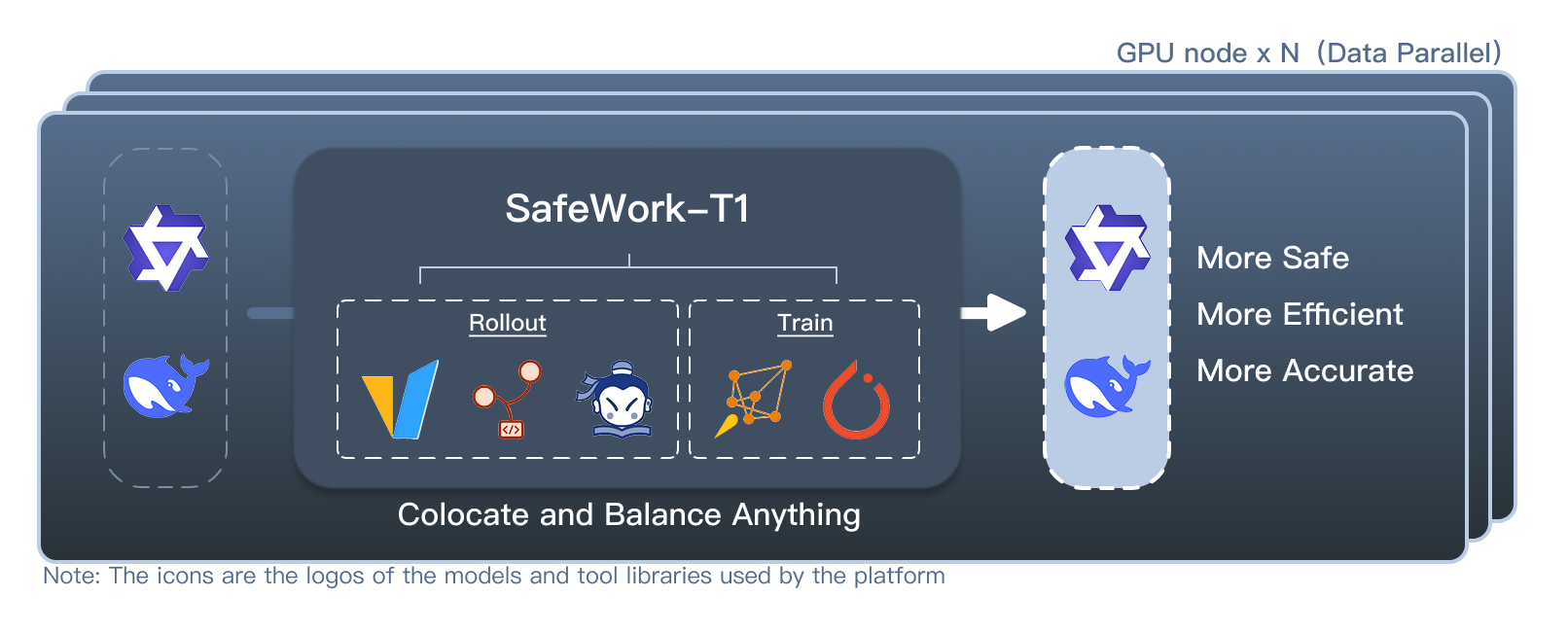

To solve these challenges, Shanghai AI Lab’s Center for Safe&Trustworthy AI introduces SafeWork-T1, a multimodal training platform focused on trustworthiness. Built on a colocate mechanism, it works like a “collapsible, modular, multi-purpose workbench”, addressing all of the pain points above to make trustworthiness-enhanced training safer, more efficient, and more accurate.

🧩 Core Designs: Universal Colocate Mechanism

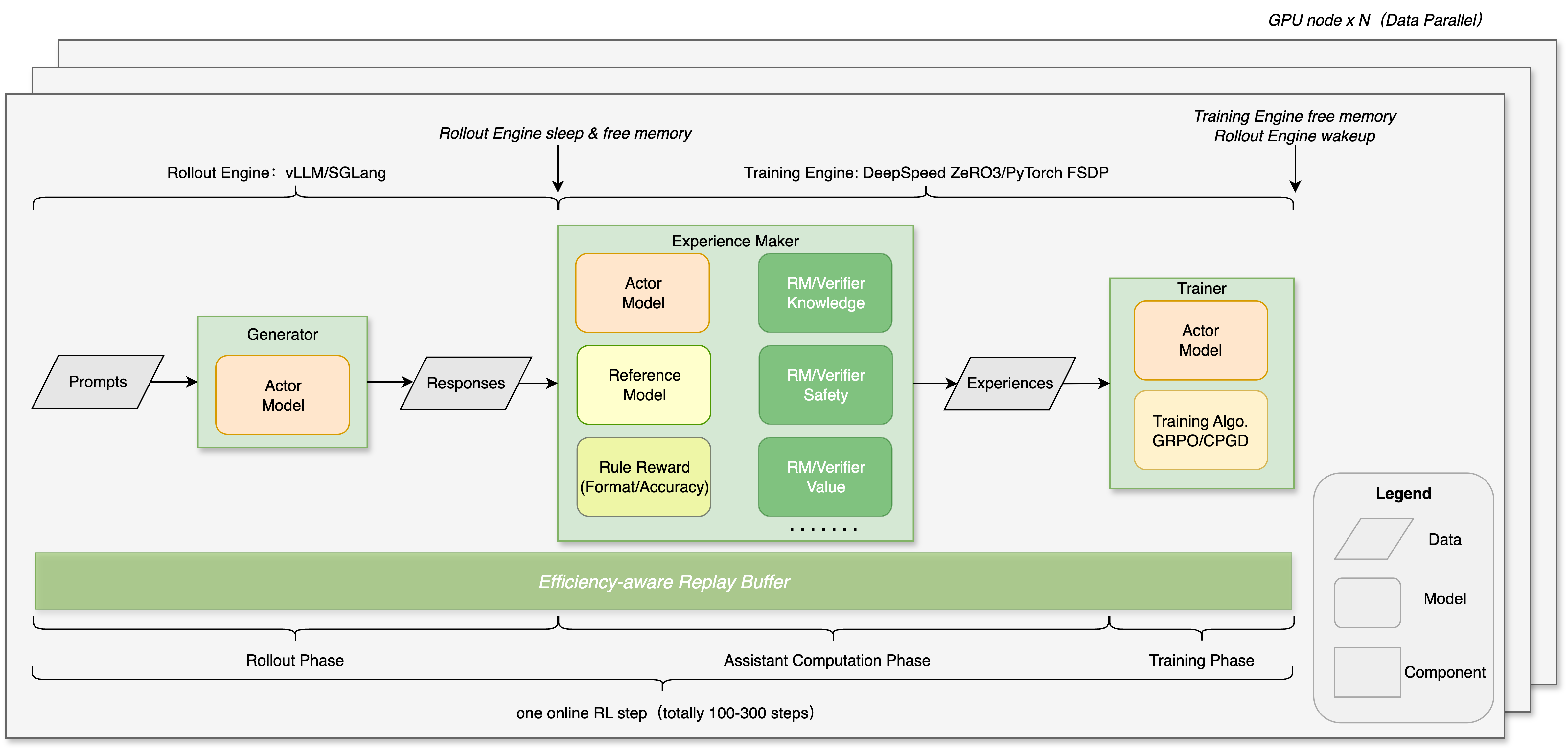

- Multi-Mode Colocate: Multi-response generation (rollout), safety checks (verification scoring), and optimization (training) all run on the same hardware, like chefs, riders, and inspectors collaborating seamlessly in one dispatch hub. This eliminates handover delays and boosts end-to-end efficiency.

- Plug-and-Play Modules: Add safety rules (e.g., “no prohibited items”) or reward mechanisms (e.g., “bonus points for positive reviews”) without rebuilding the pipeline, like swapping LEGO wheels or steering modules on the fly. The system adapts swiftly to evolving requirements.

- Efficient Mode Switching: Transition instantly between training → rollout → verification scoring modes, akin to an F1 pit stop made without shutting down the engine. Combined with flexible data routing and model sharing, this minimizes redundant resource allocation and switching overhead, keeping the system at peak efficiency while reducing total training time.
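The three design points above can be illustrated with a toy sketch. It is not SafeWork-T1's actual API: `ColocatedWorker`, `Mode`, `register_verifier`, and the example verifier are hypothetical names, and the "model" is a single number standing in for shared weights. The sketch only shows the shape of the idea: one resident worker switches between rollout, verification scoring, and training in place, and new verifiers are registered without touching the loop.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List


class Mode(Enum):
    ROLLOUT = "rollout"
    VERIFY = "verify"
    TRAIN = "train"


# Hypothetical verifier interface: (prompt, response) -> score.
Verifier = Callable[[str, str], float]


@dataclass
class ColocatedWorker:
    """Toy worker: one shared 'model' stays resident while the mode changes."""
    weights: Dict[str, float] = field(default_factory=lambda: {"bias": 0.0})
    verifiers: Dict[str, Verifier] = field(default_factory=dict)
    mode: Mode = Mode.ROLLOUT
    switches: int = 0  # counts in-place mode changes

    def register_verifier(self, name: str, fn: Verifier) -> None:
        # Plug-and-play: adding a rule does not change rollout or train.
        self.verifiers[name] = fn

    def switch(self, mode: Mode) -> None:
        # Switching only flips a flag; the weights are never reloaded.
        if mode is not self.mode:
            self.mode = mode
            self.switches += 1

    def rollout(self, prompt: str, n: int) -> List[str]:
        self.switch(Mode.ROLLOUT)
        return [f"{prompt} -> candidate {i}" for i in range(n)]

    def verify(self, prompt: str, responses: List[str]) -> List[float]:
        self.switch(Mode.VERIFY)
        # Each response's score is the sum over all registered verifiers.
        return [sum(fn(prompt, r) for fn in self.verifiers.values())
                for r in responses]

    def train(self, scores: List[float], lr: float = 0.1) -> None:
        self.switch(Mode.TRAIN)
        if not scores:
            return
        # Stand-in for an optimizer step: nudge the weight by the mean score.
        self.weights["bias"] += lr * (sum(scores) / len(scores))


# Usage: one rollout -> verify -> train cycle on the same worker.
worker = ColocatedWorker()
worker.register_verifier(
    "no_prohibited_items",
    lambda prompt, resp: 0.0 if "prohibited" in resp else 1.0,
)
candidates = worker.rollout("deliver order 42", n=3)
rewards = worker.verify("deliver order 42", candidates)
worker.train(rewards)
```

In a real system each mode would wrap an inference engine, a set of verifier models, and a training backend sharing the same GPUs; the point of the sketch is only that the loop never hands data off to a separate cluster.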

⚖️ Intelligent Scheduling: Dynamic Load Balancing

For large-scale mixed tasks (e.g., processing text/images/video/audio of varying lengths):

- Smart Task Pre-Sorting: Like a parcel sorting system, it groups tasks by text length or multimodal complexity (e.g., image resolution) to balance GPU loads—preventing scenarios where some GPUs are “overloaded to choking” while others “idle in vain”.

- Adaptive Computation Strategies:

  - Automatically discard or optimize workflows for abnormal data (e.g., skipping invalid dialogues).

  - Dynamically adjust task computation and communication loads based on device capacity.

  - Prioritize high-value samples via a central task pool.
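As a rough illustration of the scheduling ideas above, the sketch below drops invalid samples, assigns the rest to GPUs with a greedy longest-first heuristic, and serves high-value samples first from a central pool. It is an assumption-laden toy, not SafeWork-T1's scheduler: `estimate_cost`, `is_valid`, `balance`, and `TaskPool` are hypothetical names, and the cost model (tokens plus pixels divided by 1024) is made up for the example.

```python
import heapq
from typing import Dict, List, Tuple


def estimate_cost(sample: Dict) -> int:
    """Hypothetical cost model: text tokens plus scaled-down image pixels."""
    return sample.get("tokens", 0) + sample.get("pixels", 0) // 1024


def is_valid(sample: Dict) -> bool:
    """Treat zero-cost samples (e.g., empty dialogues) as abnormal data."""
    return estimate_cost(sample) > 0


def balance(samples: List[Dict], num_gpus: int) -> List[List[Dict]]:
    """Greedy longest-first: give each sample to the least-loaded GPU."""
    heap: List[Tuple[int, int]] = [(0, gpu) for gpu in range(num_gpus)]
    buckets: List[List[Dict]] = [[] for _ in range(num_gpus)]
    valid = [s for s in samples if is_valid(s)]
    for s in sorted(valid, key=estimate_cost, reverse=True):
        load, gpu = heapq.heappop(heap)  # current least-loaded GPU
        buckets[gpu].append(s)
        heapq.heappush(heap, (load + estimate_cost(s), gpu))
    return buckets


class TaskPool:
    """Central pool that returns the highest-value sample first."""

    def __init__(self) -> None:
        self._heap: List[Tuple[float, int, Dict]] = []
        self._count = 0  # tie-breaker keeps insertion order for equal values

    def push(self, sample: Dict, value: float) -> None:
        # heapq is a min-heap, so the value is negated.
        heapq.heappush(self._heap, (-value, self._count, sample))
        self._count += 1

    def pop(self) -> Dict:
        return heapq.heappop(self._heap)[2]


# Usage: six text samples of uneven length spread over two GPUs.
batch = [{"tokens": t} for t in (900, 500, 400, 300, 100, 0)]
per_gpu = balance(batch, num_gpus=2)
per_gpu_load = [sum(estimate_cost(s) for s in b) for b in per_gpu]
```

Sorting by estimated cost before assignment is what keeps one GPU from receiving all of the long sequences; adjusting for device capacity could be sketched by dividing each GPU's load by a per-device speed factor, which is left out here.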

💡 Practical Impact: Optimizing Efficiency & Usability

Compared with traditional solutions, SafeWork-T1 delivers:

- Efficiency Boost: Multimodal reinforcement learning tasks show significant speed gains.

- Seamless Upgrades: Development efficiency for integrating new safety rules or knowledge improves severalfold.

- Effortless Scaling: Maintains high-performance operation even on thousand-GPU clusters.

✨ Technical Highlights

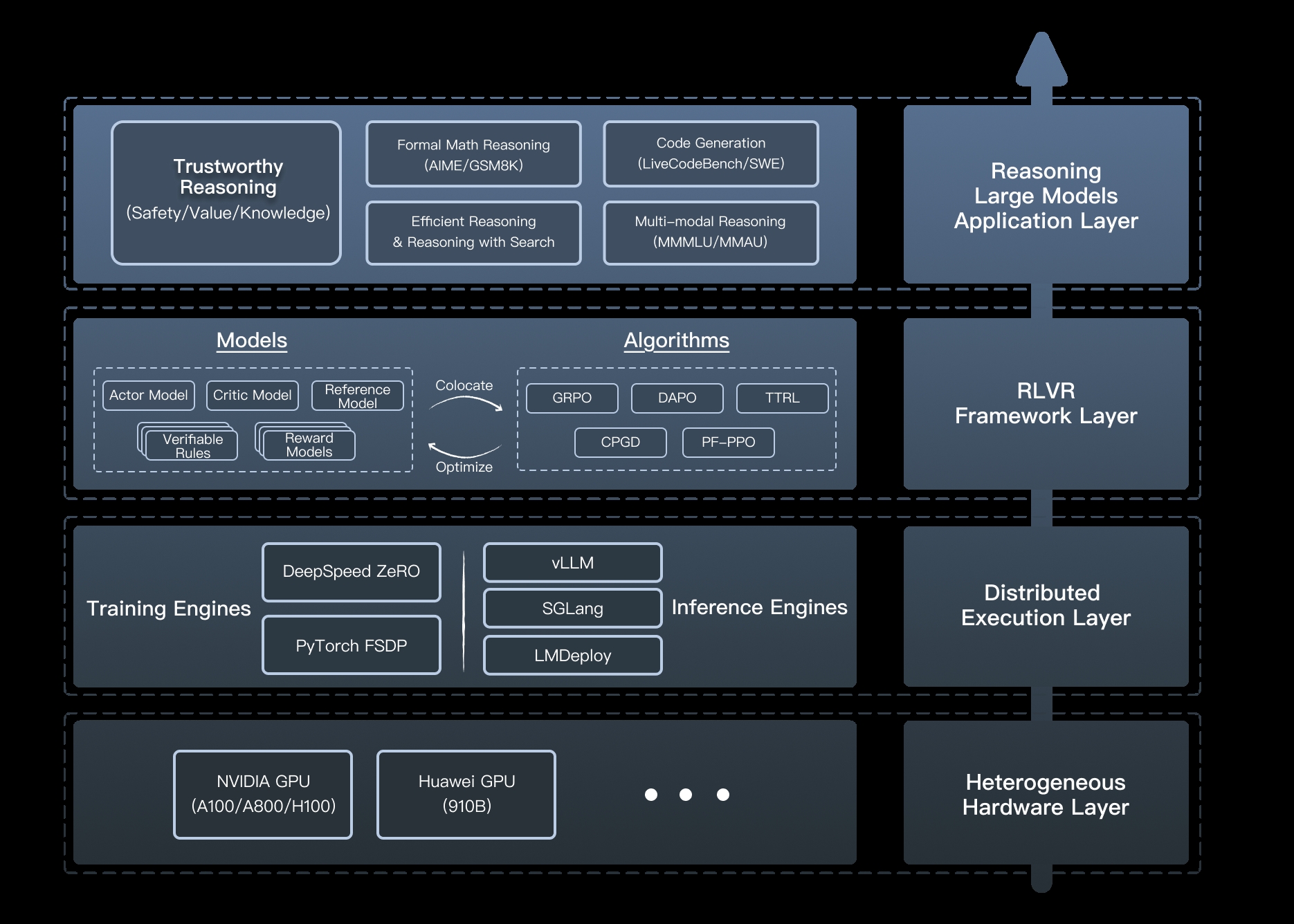

Through system innovations such as Colocate Anything and Balance Anything, SafeWork-T1’s layered architecture (shown in the figure above) offers a unified solution for:

- Rigorous safety hardening (knowledge/value alignment)

- Industrial-scale training efficiency

- Flexible rule adaptation

This infrastructure empowers researchers/engineers to focus on “making models smarter” rather than “keeping systems running”. Core components will be open-sourced soon, inviting the community to jointly build a simple, efficient, user-friendly AI safety infrastructure ecosystem.